August

01

What's post-human about the web?

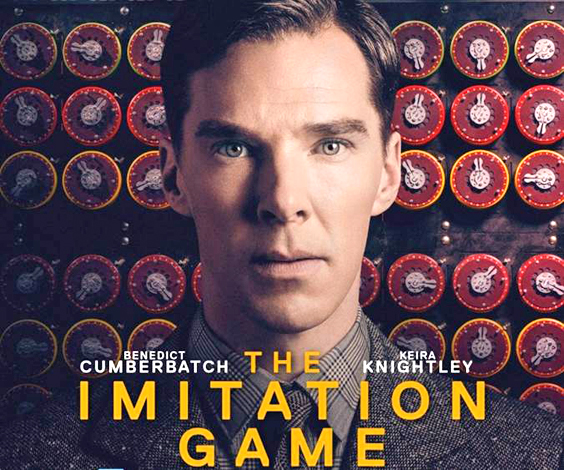

The digital age traces back to Alan Turing's pioneering work in the 1940s, including his design for the Automatic Computing Engine (ACE) at the UK National Physical Laboratory. His wartime codebreaking at Bletchley Park is dramatised in the acclaimed film The Imitation Game (2014). Since then, computers have evolved from large mainframes to internet-connected desktops and now to mobile processing technology available in the user’s personal environment.

Handheld, implantable, wearable, even paintable and other malleable processing technology makes web connectivity, multimedia, locative media and a variety of other computational functions always available to users. This is ubiquitous computing (ubicomp), a term coined by Mark Weiser in the late 1980s: technology that is everywhere, all the time. It is often called “silent” or “calm” because of the way it can unobtrusively permeate all facets of our lives.

One of the major challenges of ubicomp is the way digital technology can blur the boundary between what we know as ‘human’ and ‘non-human’. Augmented by different technologies and wireless connectivity, the human body could become indistinguishable from the cyborgs portrayed in futuristic films like Blade Runner. There are many ethical issues to face as we enter this next phase of human and post-human evolution.

The future will certainly be very interesting as technology develops. Tonight's news showed a humanoid robot in Japan. It seems C-3PO will be with us shortly!
